After spending a few years in an FMCG sales role, I moved to a marketing role. As the junior-most member of the marketing team, I spent most of my time managing projects. However, sometimes I would also get to assist the seniors in framing communication routes and testing them with consumers. I always found these ad testing sessions intriguing. You show users a set of images and text, note their reactions, probe further and make a judgment. After this, you go back to the drawing board, incorporate the users’ feedback and show the revised material to the users again. This cycle continued until we had no new insights. We would also use this format for testing the final output: show users the print ad, sometimes even sticking it in newspapers to simulate the real world. Any user feedback at this stage was more or less useless. We had already completed the shoot or were precariously close to the campaign deadline. Any changes would attract the wrath of finance as well as the seniors in the marketing team. The situation would get worse if we were testing the final video ad. Small changes could be incorporated, but if the changes were significant, the best option was to debate with the users and make them like it.

There were a few other issues that always bothered me. The users who gave feedback hovered around very rational reasoning. Common feedback would be “Will surely buy if the product is cheaper” or “I wish the detergent could make my clothes bright and at the same time smell good”. But then you go back to reality and see that the competition launched a product at a premium and people are still queueing to grab one!

Then there were experts who could cut through the clutter of random feedback and tell you exactly what the consumers meant when they gave feedback on your ad. They could decipher body language, put users under further scrutiny and then come back with feedback that was more specific. While this did not solve the problem of how to incorporate those changes in an ad that had already been created, it did solve the issue of knowing which part of the ad viewers possibly did not like. One could safely guess the users’ response to seeing the ad. But this format came with another issue. COST! Hiring these experts would cost a bomb and we had strict instructions on when to engage them. More often than not, we had to do without these experts. Experts like Millward Brown and AC Nielsen were reserved for brands and campaigns with large spends.

Then came the shocker. Even after all these tests and experts, there were shocking cases of marketing failure. Marketers just failed to understand what would resonate with consumers. From Pepsi’s Bleed Blue to Tata Nano, the marketing world is full of such cases.

All through these processes, I craved an ad testing methodology that was simpler, more effective, faster and cheaper.

A quick summary of the things I wanted to change:

- Time taken – It takes time to take an ad to users, get their feedback, make changes and then retest. Time is also lost in making those changes to the ad. In the case of video ads, these changes might be as good as creating a new video.

- Expensive – Each touchpoint with the consumer to test the ad, making changes to the ad and then retesting costs a lot of money. As explained earlier, concepts and image ads are comparatively cheaper to recreate, but video and audio ads involve a lot of man-hours and money.

- Lack of expertise – Just showing an ad to users and asking whether they liked it does not serve the purpose. It’s like knowing something is wrong but not knowing exactly what is wrong. How is a marketer supposed to react?

And then something interesting happened. My marketing boss hired a fresh engineering graduate (let’s call him Larry) to handle online advertising. Online advertising in those days was confined to Google and FB. The rookie had no prior marketing experience and was hired for his skills in Microsoft Excel! Larry turned out to be a soothsayer. Without going to any of the consumer focus groups for ad testing, Larry would tell you which ads would appeal to users and which would not. He could also tell the team with decent accuracy which taglines would be a hit and which would fail. And that’s when I decided to play Sherlock Holmes and uncover how Larry could test ads with hardly any time, money or expertise. My adventure turned out to be a damp squib. What Larry was doing was very simple. Let me elaborate:

How to test text, taglines, and call to action

Larry would create a series of text ads using Google’s text ad creator. These ads were permutations and combinations of the possible variants, changing one variable at a time. He would then create a test campaign and place all the ads in a single ad group. With a minimal budget, Google would test all the ads and give performance results for each. A handy report tells you the likelihood of someone clicking on each ad. The report doesn’t stop there. A marketer can also ascertain what the people clicking on these ads do once they click (quality).
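As a rough illustration of the approach above, here is a small Python sketch that builds variants differing from a base ad in exactly one element and then ranks them by click-through rate (clicks divided by impressions). The headlines, the call-to-action text, and the impression and click counts are all made up for illustration. In practice the numbers would come from the ad platform's report, and none of this is Google's own tooling.

```python
# Hypothetical base ad and the alternatives to try for each element.
BASE = {"headline": "Brighter clothes in one wash", "cta": "Buy now"}
ALTERNATIVES = {
    "headline": ["Fresh smell that lasts all day"],
    "cta": ["Try a free sample"],
}


def one_at_a_time_variants(base, alternatives):
    """Return the base ad plus variants that change exactly one element."""
    variants = [dict(base)]
    for field, options in alternatives.items():
        for option in options:
            variant = dict(base)
            variant[field] = option
            variants.append(variant)
    return variants


def rank_by_ctr(report):
    """Sort (ad_name, impressions, clicks) rows by click-through rate, best first."""
    rows = [
        (name, clicks / impressions)
        for name, impressions, clicks in report
        if impressions > 0
    ]
    return sorted(rows, key=lambda row: row[1], reverse=True)


variants = one_at_a_time_variants(BASE, ALTERNATIVES)

# Made-up report figures, one row per variant: (name, impressions, clicks).
report = [("ad_1", 1000, 20), ("ad_2", 1000, 25), ("ad_3", 1000, 40)]
ranking = rank_by_ctr(report)
for name, ctr in ranking:
    print(f"{name}: CTR {ctr:.1%}")
```

With so few impressions, small gaps between ads may just be noise, so it is worth letting the test run longer before declaring a winner.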

With millions of advertisers running ad campaigns across the world, best practices and performance benchmarks are easily available to help you improve.

How to test Video, Audio and Image ads

Google comes in handy again. Instead of running a text ad on the search platform, one should use the display ad type. The measurement methodology remains the same. YouTube will tell you which video ads people viewed completely, at which point people skipped the ad, and whether people are clicking on the call to action. Similarly, image display ads can be used to measure the likeability of an ad, which is inferred from how many people click on it. This number, when compared with the benchmark for your industry, indicates how good or bad the ad is.
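The comparison described above can be sketched in a few lines of Python. This is only an illustration: the function names are my own, the counts are invented, and the 0.5% benchmark is an assumed figure, not a real industry number.

```python
def rate(part, whole):
    """Return part / whole, or 0.0 when there is nothing to divide by."""
    return part / whole if whole else 0.0


def score_video_ad(impressions, completed_views, clicks, benchmark_ctr):
    """Summarise one video ad and compare its click-through rate with a benchmark."""
    ctr = rate(clicks, impressions)
    return {
        "completion_rate": rate(completed_views, impressions),
        "ctr": ctr,
        "beats_benchmark": ctr > benchmark_ctr,
    }


# Made-up numbers for a single ad; 0.005 is an assumed benchmark (0.5% CTR).
summary = score_video_ad(
    impressions=2000, completed_views=500, clicks=16, benchmark_ctr=0.005
)
print(summary)
```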

In many cases, YouTube can also be used to estimate the lift in awareness. In what is termed a Brand Lift study, YouTube measures the increase in awareness, likeability and purchase intent by running a survey. The survey is run across two groups. The first group has been shown the ad. The second group has not been shown the ad. The difference in responses is then used to estimate the increase in awareness and the other measures.
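The arithmetic behind such a two-group comparison is simple, and a minimal Python sketch follows. It is a simplified view and not YouTube's actual methodology: the function name and the survey counts are hypothetical, and a real study would also check whether the difference is statistically significant.

```python
def brand_lift(exposed_positive, exposed_total, control_positive, control_total):
    """Return (absolute_lift, relative_lift) between exposed and control groups.

    Each rate is the share of respondents in a group who answered positively,
    for example the share who said they were aware of the brand.
    """
    exposed_rate = exposed_positive / exposed_total
    control_rate = control_positive / control_total
    absolute = exposed_rate - control_rate
    # Relative lift is undefined when nobody in the control group answered positively.
    relative = absolute / control_rate if control_rate > 0 else None
    return absolute, relative


# Made-up survey counts: 300 of 1000 exposed vs 240 of 1000 control answered positively.
absolute, relative = brand_lift(300, 1000, 240, 1000)
print(f"Absolute lift: {absolute:.1%}, relative lift: {relative:.1%}")
```

The absolute lift is the difference in percentage points between the two groups, while the relative lift expresses that difference as a fraction of the control group's rate.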

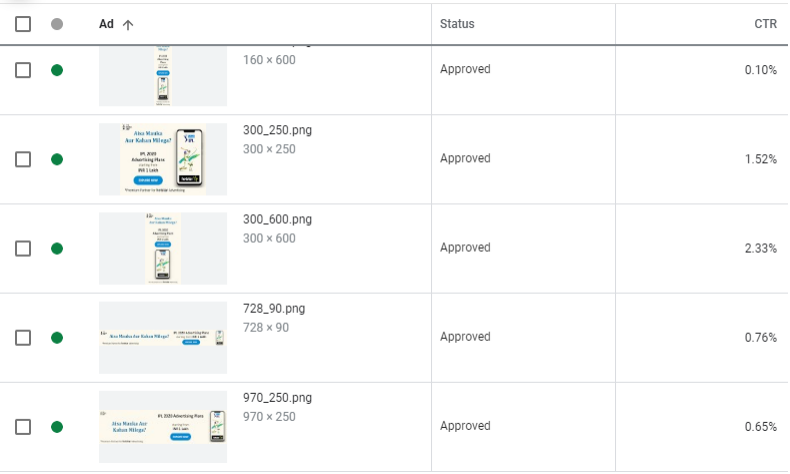

The image shows that the 3rd ad is the best-performing ad of the set.

In Larry’s time, these cheap and quick methods of checking ads were available only on Google and Facebook. But today, you can run the same tests on multiple platforms like Hotstar, LinkedIn and TikTok.

This raises the question: if these tests are so simple, easy and cheap, why isn’t everyone doing them? Why do market research companies like AC Nielsen, Millward Brown and TAM still exist? Like everything else in the world, ad testing using Google and other online platforms has its limitations. The first and biggest of them is the type of medium. Brands planning a campaign that will run predominantly offline might suffer from online bias. Online, there is one-to-one interaction with the brand, which is not available offline. Because of this, an ad that might deliver well offline can fail the ad test on YouTube. When someone sees an ad in a cinema or on television, they are more likely to stay through the ad than when watching the same ad on YouTube. The reason is that skipping an ad takes almost zero effort online, while it requires some effort offline.

The second issue faced by brands is the user profile. If someone wants to test their ad on an audience that is predominantly offline, these tests might not hold good. This is especially true for mass brands with predominantly female target groups and rural reach.

There are further limitations to this method, and cases where a traditional ad testing method is required. One limitation applies to brands that have limited or no online presence. In such cases, it becomes difficult to use a landing page for testing quality. To circumvent this issue, one can create a temporary landing page, but the limitation still exists. Another limitation is that these tests are not diagnostic in nature. They tell you which ad is good and which is not. But they don’t tell you why. Online testing of an ad will not give you a set of learnings that can be replicated across campaigns. For this, you still need traditional market research methods like focus groups, quantitative testing and in-depth interviews.

While Larry can take you this far, the market research agencies listed on the 12th Cross can do the rest. Check out the list of Market Research agencies on the 12th Cross.
